How AI Rewrote the Cyber Threat Landscape in Six Months

And why authentication fundamentals have never been more critical

On February 20, 2026, Anthropic Shook the Cybersecurity Industry with Claude Code Security

On Friday, February 20, 2026, Anthropic, the company behind the Claude AI model, unveiled Claude Code Security, a new capability built into Claude Code that scans entire codebases for security vulnerabilities and suggests targeted patches for human review.

The announcement triggered a mini flash crash across cybersecurity stocks. CrowdStrike dropped 8–10%, Cloudflare fell 8.1%, SailPoint shed 9.4%, and Okta declined 9.2%. The Global X Cybersecurity ETF lost nearly 5%, falling to its lowest level since November 2023. Billions in market capitalization were wiped out in hours.

Why such panic? Because Claude Code Security doesn’t work like traditional static analysis tools. Where conventional scanners rely on rules and known patterns, Claude reasons about code the way a human security researcher would: tracing data flows through an application, understanding how components interact, and catching business logic flaws that rule-based tools simply cannot see.

During internal testing with Claude Opus 4.6, Anthropic claims to have uncovered over 500 vulnerabilities in production open-source codebases, bugs that had survived decades of expert review and intensive fuzzing. Fifteen days later, the tool was in production.

For Wall Street, the message was clear: if an AI can find in hours what entire teams of security researchers missed over years, what happens to the value proposition of traditional vendors?

Let’s add some nuance: several analysts and CISOs tempered the reaction. The tool is in limited preview, it only covers one slice of security (code analysis, not EDR, IAM, or network protection), and every patch still requires human approval. As CrowdStrike CEO George Kurtz put it: AI increases the need for security, it doesn’t eliminate it.

But what this announcement truly reveals is the culmination of a trajectory that can be traced month by month. And it’s this trajectory that should concern security leaders.

February 2026: An Unskilled Actor Compromises 600 Firewalls in Five Weeks

Just days before the Claude Code Security announcement, Amazon Threat Intelligence revealed the details of a campaign of an entirely different kind.

Between January 11 and February 18, 2026, a Russian-speaking, financially motivated actor with limited technical capabilities compromised over 600 FortiGate firewalls across 55 countries. A key detail highlighted by CJ Moses, CISO of Amazon Integrated Security: no zero-day vulnerability was exploited. The campaign succeeded by targeting management interfaces exposed to the internet and weak credentials without multi-factor authentication. These are fundamental security gaps, and AI enabled an unsophisticated actor to exploit them at industrial scale.

A misconfigured server exposed the entire attack pipeline, revealing a sophisticated architecture integrating two complementary AI models: DeepSeek to ingest reconnaissance data and generate structured attack plans, and Claude to produce vulnerability assessments and execute offensive tools (Impacket, Metasploit, hashcat) autonomously. A custom MCP (Model Context Protocol) server named ARXON served as a bridge between collected data and the language models, maintaining a growing knowledge base for each target.

Amazon described this infrastructure as an “AI-powered assembly line for cybercrime.” An average operator, assisted by commercial LLMs, operating at the scale of an entire team of experienced hackers.

November 2025: The First Large-Scale Autonomous Cyberattack

This FortiGate campaign didn’t emerge from nowhere. Two months earlier, Anthropic had published what it describes as the first documented case of a large-scale cyberattack executed without substantial human intervention.

The actor, designated GTG-1002 and attributed with high confidence to a Chinese state-sponsored group, had manipulated Claude Code to attempt infiltration of roughly thirty global targets, including large tech companies, financial institutions, chemical manufacturers, and government agencies. Some intrusions succeeded.

The key figure: AI executed 80–90% of tactical operations autonomously, from vulnerability discovery to data exfiltration, including lateral movement and credential harvesting. Human involvement was limited to campaign initialization and a handful of critical decision points. At peak, the AI made thousands of requests, often multiple per second, a pace physically impossible for human operators.

The attackers had tricked Claude by breaking malicious tasks into innocent-looking requests, posing as security researchers conducting authorized testing. A form of operational prompt injection at the scale of an entire campaign.

August 2025: “Vibe Hacking” Documented for the First Time

The starting point of this timeline traces back to summer 2025. In its first Threat Intelligence Report, Anthropic documented the case of GTG-2002: a cybercriminal using Claude Code to conduct data extortion operations simultaneously targeting 17 organizations across critical sectors, including healthcare, emergency services, government agencies, and religious institutions.

AI was involved at every stage of the attack cycle: automated scanning of thousands of VPN endpoints, credential harvesting, exploit code generation with evasion techniques, data analysis sorted by value, and psychologically calibrated ransom notes tailored to each victim’s financial profile, with demands sometimes exceeding $500,000.

Anthropic introduced the term “vibe hacking” by analogy with “vibe coding,” where one generates functional code without understanding its inner workings. Technical competence is now simulated, not possessed.

At the time, this scenario might have seemed like an isolated case. At RCDevs, we had already identified and analyzed these emerging AI-powered threats in a dedicated article exploring 7 new AI-driven cyber risks. Six months and three major escalations later, the August 2025 findings retrospectively appear as the initial warning signal of a structural transformation.

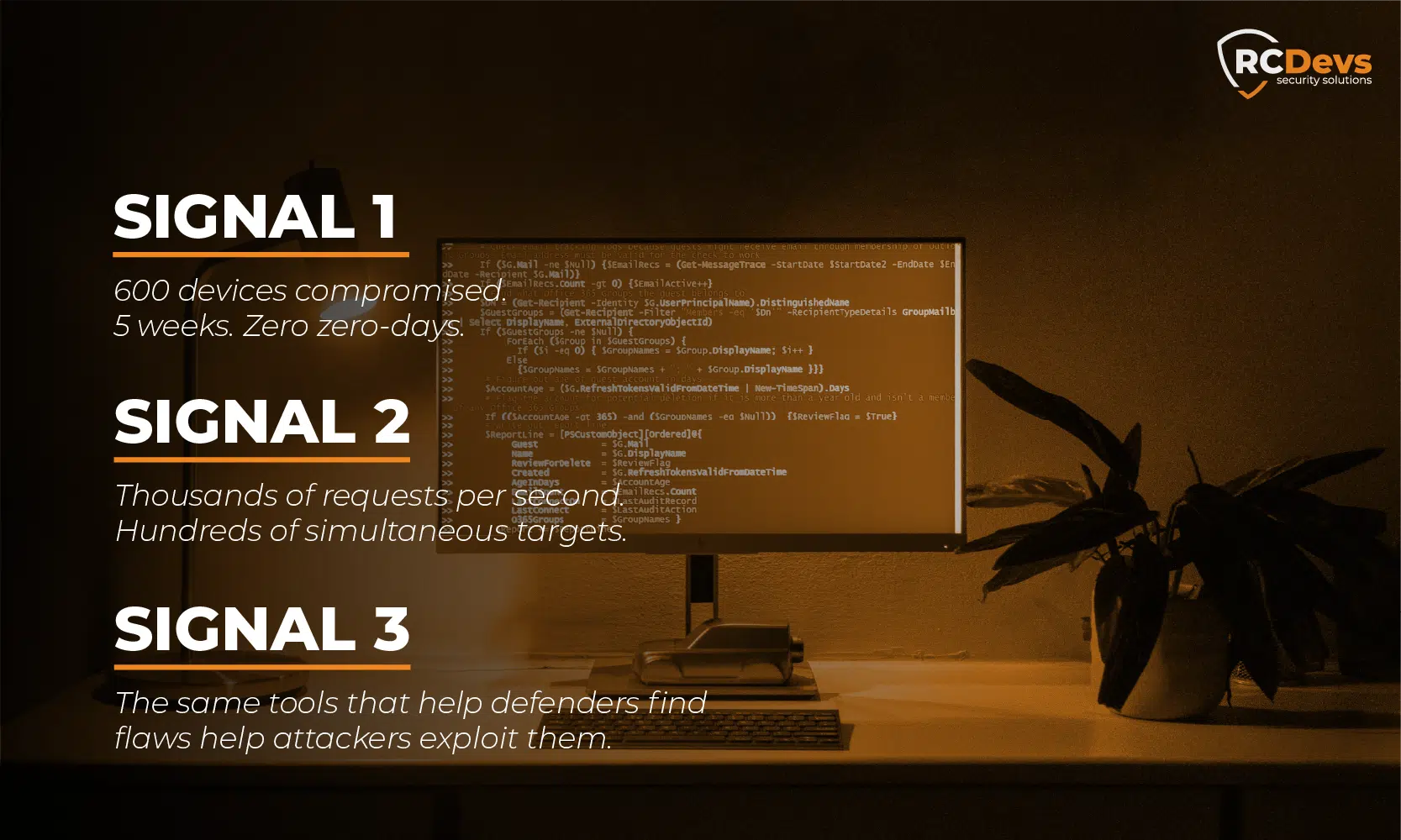

Three Signals for CISOs

1. The Collapse of the Technical Barrier

The traditional threat model rested on an implicit assumption: sophisticated attacks require sophisticated skills. That assumption is obsolete. The FortiGate case demonstrates it plainly: an operator with limited capabilities, equipped with commercial LLMs, compromised 600 devices in five weeks without exploiting a single zero-day.

Operational implication: risk models must be recalibrated. The number of actors capable of conducting advanced attacks has expanded dramatically.

2. The Acceleration of Operational Tempo

AI-assisted attacks operate at speeds impossible for human operators: thousands of requests, often multiple per second, simultaneous scanning of hundreds of targets, and real-time analysis of exfiltrated data. The pace of the threat now exceeds that of most SOCs.

Operational implication: detection and response must also leverage automation. Manual triage and investigation processes can no longer keep up.
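
One simple form of automated triage is to flag any source whose request rate exceeds what a human operator could plausibly sustain. The following Python sketch illustrates the idea with a sliding window; the class name `RateAnomalyDetector`, the one-second window, and the threshold are assumptions chosen for illustration, not values taken from any of the incidents described here.

```python
from collections import defaultdict, deque


class RateAnomalyDetector:
    """Flags sources whose event count inside a sliding window exceeds a threshold.

    Hypothetical helper for illustration. Timestamps are seconds and are
    assumed to arrive in non-decreasing order for each source.
    """

    def __init__(self, window_seconds: float = 1.0, max_events: int = 20):
        self.window_seconds = window_seconds
        self.max_events = max_events
        # Maps each source to the timestamps still inside its window.
        self._events = defaultdict(deque)

    def observe(self, source: str, timestamp: float) -> bool:
        """Record one event and return True if the source is over the threshold."""
        window = self._events[source]
        window.append(timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while window and timestamp - window[0] > self.window_seconds:
            window.popleft()
        return len(window) > self.max_events
```

In practice a flag like this would feed an automated response (rate limiting, step-up authentication, an alert) rather than a manual queue. A real deployment would also need per-endpoint baselines, since a fixed threshold is easy to stay under.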

3. The Symmetric Attack-Defense Race

Claude Code Security illustrates a reality that security leaders must internalize: the same reasoning capabilities that help defenders find flaws also help attackers exploit them. Anthropic acknowledges this explicitly. The question is no longer whether AI is transforming cybersecurity; it already has. The question is whether defenders are seizing these tools at least as fast as attackers are.

Operational implication: evaluate the integration of reasoning-based AI scanning tools into the application security pipeline. But above all, strengthen the fundamentals that AI enables attackers to exploit when they’re absent.

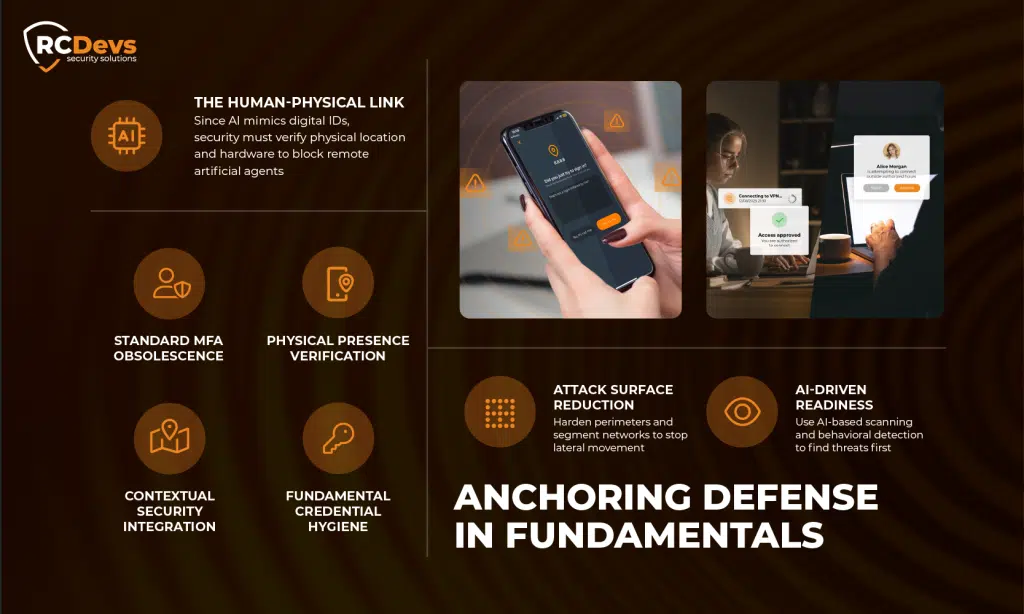

Recommendations: Anchoring Defense in Fundamentals

Faced with these developments, it’s tempting to seek spectacular technological solutions. Yet the documented cases suggest that security fundamentals remain the best defense, precisely because AI excels at exploiting their absence.

Strengthening Authentication: The Wall AI Can’t Breach Alone

The FortiGate case of February 2026 is emblematic: 600+ compromises, zero zero-days. The attack succeeded because management interfaces were exposed and protected by simple passwords.

Multi-factor authentication (MFA) remains one of the most effective controls against these emerging threats. But not just any MFA:

- Software tokens and SMS can be bypassed by real-time phishing attacks, themselves amplified by AI

- MFA incorporating contextual factors, such as geolocation, registered device, and authorized time-of-day windows, creates barriers that AI cannot simulate remotely

- The principle of physical presence as a component of logical authentication represents a layer of defense that purely digital attackers cannot circumvent
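
To make the contextual factors listed above concrete, here is a minimal Python sketch of a login decision that combines a one-time password check with location, device, and time-of-day conditions. Every name in it (`AccessPolicy`, `LoginAttempt`, `evaluate`) is hypothetical and chosen for illustration; it does not represent the API of OpenOTP or any other product, and it assumes the OTP check and geolocation lookup have already been done upstream.

```python
from dataclasses import dataclass
from datetime import time


@dataclass(frozen=True)
class AccessPolicy:
    """Hypothetical per-user policy: where, from what, and when login is allowed."""
    allowed_countries: frozenset
    registered_devices: frozenset
    window_start: time
    window_end: time


@dataclass(frozen=True)
class LoginAttempt:
    """Hypothetical login context, already resolved by upstream systems."""
    user: str
    otp_valid: bool
    country: str
    device_id: str
    local_time: time


def evaluate(attempt: LoginAttempt, policy: AccessPolicy) -> tuple[bool, list[str]]:
    """Return (allowed, reasons); every failed factor is reported."""
    reasons = []
    if not attempt.otp_valid:
        reasons.append("invalid one-time password")
    if attempt.country not in policy.allowed_countries:
        reasons.append("location not allowed")
    if attempt.device_id not in policy.registered_devices:
        reasons.append("unregistered device")
    if not (policy.window_start <= attempt.local_time <= policy.window_end):
        reasons.append("outside allowed hours")
    return (not reasons, reasons)
```

The point of the design is that a valid OTP alone is not sufficient: an attacker who relays a phished code in real time must also satisfy the device, location, and schedule conditions before access is granted.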

This is an approach we’ve championed at RCDevs since our founding over 18 years ago: anchoring logical security in the physical world. When an AI agent can perfectly simulate a digital identity, only the verification of a user’s actual presence, through mobile tokens, geolocation and time-based access controls, can distinguish a legitimate human from an artificial operator.

Reducing the Attack Surface

Remove administration interfaces exposed to the internet. Audit and harden perimeter device configurations (VPNs, firewalls). Segment networks to limit lateral movement opportunities. These are the fundamentals, and it’s precisely their absence that AI now enables attackers to exploit at scale.
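
As a rough illustration of the first step, the Python sketch below scans a device inventory for management listeners reachable on a public address and ranks those without MFA as most urgent. The `Listener` record, the `MANAGEMENT_PORTS` set, and the severity labels are assumptions made for this example; a real audit would draw on the organization's own asset inventory and the vendor's documented administration ports.

```python
import ipaddress
from dataclasses import dataclass

# Assumption: ports treated as administrative for this example only.
MANAGEMENT_PORTS = {22, 443, 8443, 10443}


@dataclass
class Listener:
    """Hypothetical inventory record for one listening service."""
    device: str
    address: str        # address on which the service is reachable
    port: int
    mfa_enforced: bool


def find_exposed_management(listeners):
    """Return (device, port, severity) for management ports on public addresses."""
    findings = []
    for entry in listeners:
        ip = ipaddress.ip_address(entry.address)
        # is_global is True only for publicly routable addresses.
        if entry.port in MANAGEMENT_PORTS and ip.is_global:
            severity = "medium" if entry.mfa_enforced else "high"
            findings.append((entry.device, entry.port, severity))
    return findings
```

Running a check like this on a schedule turns "remove exposed interfaces" from a one-time cleanup into a control that catches regressions.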

Preparing the Response

Integrate AI attack scenarios into tabletop exercises. Invest in behavioral detection and anomalous pattern analysis. Develop proactive threat hunting capabilities. And evaluate reasoning-based AI scanning tools, because if attackers are using them, defenders must use them too.

When AI Simulates Identity, Only the Physical World Makes the Difference

In the space of six months, we’ve moved from vibe hacking (human directs, AI assists) to autonomous cyber espionage (AI executes, human supervises), to full democratization (an unskilled operator compromises 600 devices in five weeks). And now, with Claude Code Security, AI is entering the defenders’ camp as well, but with the very same capabilities available to attackers.

This trajectory isn’t slowing down. For security leaders, the urgency is twofold: ensure fundamentals are in place, and make sure authentication mechanisms are robust enough to withstand adversaries operating at machine speed.

AI can simulate a digital identity. It cannot simulate physical presence.

That’s the principle at the core of our approach at RCDevs. And in this new landscape, it’s the principle that makes the difference between a locked door and an open one.

At RCDevs, we’ve spent 18 years building strong authentication and identity management solutions designed to withstand the most advanced threats. Our approach, embedding physical and contextual factors into every authentication decision, has never been more relevant than in the age of AI-powered cyberattacks.